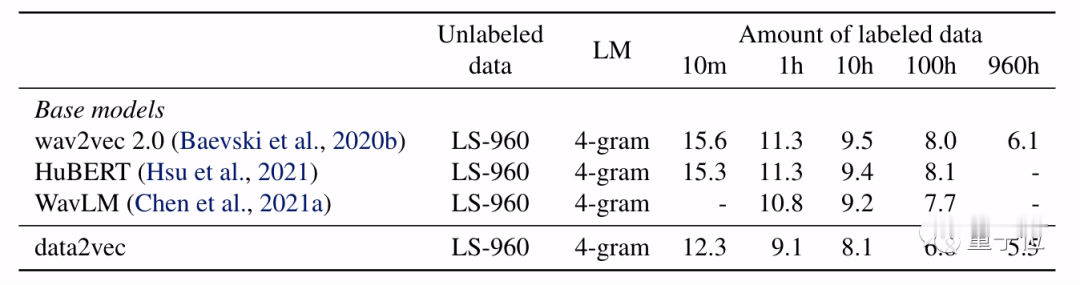

Deep Learning and AI Engineer at AssemblyAI

This week's Deep Learning Paper Review is data2vec: A General Framework for Self-supervised Learning in Speech, Vision, and Language.

Until recently, self-supervised learning (SSL) techniques were developed with a single modality in mind. This means there are big differences in the way self-supervised learning algorithms learn from images, speech, text, and other modalities. Data2vec, however, removes the need to use different methods for different modalities. It is the first SSL framework that uses the same learning method for speech, NLP, and computer vision and achieves state-of-the-art results.

Self-supervised learning enables computers to learn just by observing and then figuring out the structure of images, speech, or text. This approach has a lot of advantages for scaling, especially in low-resource scenarios such as ASR for different languages and dialects. Research in self-supervised learning today is almost always focused on one particular modality. Data2vec is the first framework for SSL that works for different modalities.

Data2vec simplifies this by training models to predict their own representations of the input data, regardless of the modality. Instead of predicting modality-specific targets such as words, visual tokens, or units of human speech, which are local in nature, data2vec predicts contextualized latent representations that contain information from the entire input. The core idea is to predict latent representations of the full input data based on a masked view of the input, in a self-distillation setup using a standard Transformer architecture. By focusing on these representations (the layers of a neural network) instead of predicting visual tokens, words, or sounds, a single algorithm can work with completely different types of input. This removes the dependence on modality-specific targets in the learning task.

Data2vec uses a teacher network to first compute target representations from an image, a piece of text, or a speech utterance. It then masks part of the input and processes it with a student network, which predicts the latent representations of the teacher. The student model has to predict representations of the full input data even though it has a view of only some of the information. The teacher network is identical to the student model but with weights that are slightly out of date.

For example, in speech, the most popular SSL approaches are wav2vec 2.0 and HuBERT. Both discretize representations in order to train the models. Data2vec instead directly predicts contextualized latent representations without quantization.
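To make the teacher-student setup described in this review more concrete, here is a minimal toy sketch in plain Python. It is not the authors' implementation: the `encode` function is a hypothetical stand-in for a Transformer, the squared-error loss is a simplification chosen for readability, and the exponential moving average in `ema_update` (with an illustrative `tau`) is an assumption about how a teacher whose weights are "slightly out of date" could be maintained. All function names are made up for this example.

```python
def ema_update(teacher, student, tau=0.999):
    """Move each teacher weight a small step toward the student weight.

    With tau close to 1 the teacher lags behind the student, i.e. its
    weights are a slightly out-of-date copy of the student's weights.
    """
    return [tau * t + (1.0 - tau) * s for t, s in zip(teacher, student)]


def encode(weights, inputs):
    """Toy 'contextualized' encoder (hypothetical stand-in for a Transformer).

    Every output position mixes in the mean of the whole input, so each
    representation carries information from the entire sequence.
    """
    context = sum(inputs) / len(inputs)
    return [w * (x + context) for w, x in zip(weights, inputs)]


def masked_regression_loss(student_w, teacher_w, inputs, mask):
    """Mean squared error between student predictions and teacher targets.

    The teacher encodes the full input; the student only sees a masked
    view (masked positions are zeroed out here). The error is averaged
    over the masked positions.
    """
    if not any(mask):
        raise ValueError("at least one position must be masked")

    # Teacher targets are computed from the unmasked input.
    targets = encode(teacher_w, inputs)

    # Student predictions are computed from the masked view.
    masked_inputs = [0.0 if m else x for x, m in zip(inputs, mask)]
    preds = encode(student_w, masked_inputs)

    errors = [(p - t) ** 2 for p, t, m in zip(preds, targets, mask) if m]
    return sum(errors) / len(errors)
```

In a real training loop the student weights would be updated by gradient descent on this loss, and `ema_update` would be called after each step so that the teacher slowly tracks the student. Nothing in the sketch depends on whether `inputs` came from an image, text, or audio, which is the point of predicting latent representations rather than modality-specific targets.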
